We thought agentic AI would solve flashcard quality. Generate, review, update — the classic loop. It produced beautiful cards. Then our users told us the truth. Evals told us why.


The agentic starting point

When we first built our AI flashcard generation pipeline, we reached for the pattern that felt most natural: generate-review-update. An LLM generates the initial flashcard set from a teacher’s curriculum document. A second pass reviews each card for accuracy, clarity, and pedagogical soundness. A third pass rewrites anything the reviewer flagged.

The results looked excellent. Cards were well-structured, answers were grounded in the source material, and learning objectives were cleanly mapped. Early pilot teachers loved them. We shipped it.

Then the feedback arrived

Within weeks, teachers started asking questions we didn’t expect:

“Why am I only getting 10 cards for a 24-page module?”

“This took two minutes to generate. Can it be faster?”

“The hint basically tells the student the answer.”

“This question is confusing — a student could answer three different things.”

We had optimized for what looked good in a demo. We hadn’t measured what actually worked in a classroom. We needed a systematic way to separate signal from noise — a way to know, with data, whether a change made things better or worse.

We needed evals.

Building our evaluation framework

We designed three evaluation suites, each testing a different dimension of flashcard quality:

- Ungrounded eval — 10 topic-based cases (Grade 6 fractions, Grade 10 Philippine heroes, college Python, etc.). No source document. Tests whether the model can generate structurally sound cards from its own knowledge.

- Grounded eval (no hints) — seven cases with real curriculum PDFs (arithmetic sequences, plate tectonics, Blue Ocean Strategy, corporate finance, etc.). Tests faithfulness to source material and pedagogical review quality.

- Grounded eval (with hints) — same seven cases, but the model must also generate two-level pedagogical hints per card. This is the hardest test — the one that exposed the biggest differences between models.

Each suite runs automated assertions (card count in range, no empty answers, valid learning objectives) plus LLM-as-judge evaluations that check for atomicity, faithfulness, hint quality, and prompt clarity. We track a composite review score (0 to 1) that captures the fraction of cards an expert reviewer would consider issue-free.
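To make the two layers concrete, here is a minimal sketch of what the automated assertions and the review score could look like. It is an illustration under stated assumptions, not our production code: the `Card` fields, the `allowed_objectives` set, and the function names are all hypothetical, and the `flagged` field stands in for whatever an LLM judge or human reviewer decides.

```python
from dataclasses import dataclass


@dataclass
class Card:
    # Hypothetical card shape, for illustration only.
    prompt: str
    answer: str
    objective: str         # id of the learning objective the card maps to
    flagged: bool = False   # set by a judge or reviewer when the card has an issue


def run_assertions(cards, min_cards, max_cards, allowed_objectives):
    """Return a list of human-readable failures; an empty list means all checks passed."""
    failures = []
    if not (min_cards <= len(cards) <= max_cards):
        failures.append(f"card count {len(cards)} outside [{min_cards}, {max_cards}]")
    for i, card in enumerate(cards):
        if not card.answer.strip():
            failures.append(f"card {i}: empty answer")
        if card.objective not in allowed_objectives:
            failures.append(f"card {i}: unknown learning objective {card.objective!r}")
    return failures


def review_score(cards):
    """Fraction of cards (0 to 1) that were not flagged."""
    if not cards:
        return 0.0
    return sum(not card.flagged for card in cards) / len(cards)
```

The assertions are cheap and deterministic, so they can run on every generation. The review score depends on judgments that are more expensive to produce, which is why the sketch keeps the two apart.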

With this framework in place, every change to our pipeline became measurable. No more guessing.

Evals didn’t just measure our output. They changed how we think about generation itself.

The surprising cost of “review”

The first thing we tested was the pipeline we were already running in production. The review step was supposed to be our quality safety net. The eval data told a different story.

The review step was actively harming card quality.

When the reviewer flagged an issue and the updater attempted a correction, the “fix” frequently introduced new problems. A card that was slightly imprecise would get rewritten into something ambiguous. A simple fill-in-the-blank would get expanded into a multi-part question that violated the atomicity principle.

Prompt: “The formula aₙ = a₁ + (n-1)d is used to find the {{blank}} term of an arithmetic sequence.”

Answer: “nth”

Bloom: understand

After review-update: the card was “improved” by expanding it to ask what each variable represents, turning a single-concept card into a four-part answer. The reviewer then flagged this as “not atomic.” The fix created the very problem it tried to solve.

We saw this pattern repeatedly. The Blue Ocean Strategy case was especially bad: seven out of 18 cards were flagged after the review-update cycle, with issues ranging from multi-part answers to hints that contained logical errors.

And the cost wasn’t just quality. With the review-update loop, generating a single flashcard set took approximately 120 seconds — three sequential LLM calls, each waiting on the previous. For a teacher generating cards during a prep period, that’s a non-starter.

Cutting the loop: one-shot generation

The eval data pointed to a clear hypothesis: skip the review-update step entirely. Invest that effort into a better generation prompt instead. One call, one shot, better cards.

We restructured our prompt to encode the quality criteria that the reviewer had been checking — atomicity, no trick questions, concise answers for fill-in-the-blank cards, faithfulness to source material — directly into the generation instructions.
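As a rough illustration of what "encoding the criteria into the prompt" can mean, the sketch below assembles a generation prompt from a list of rules. The wording of the rules, the `build_generation_prompt` name, and the prompt layout are assumptions made for this example; the actual production prompt is not reproduced here.

```python
# Hypothetical phrasing of the criteria named above; not the real prompt text.
QUALITY_CRITERIA = (
    "Each card tests exactly one concept (atomic).",
    "No trick questions.",
    "Fill-in-the-blank answers are concise.",
    "Every answer is faithful to the source material.",
)


def build_generation_prompt(source_text, min_cards, max_cards, criteria=QUALITY_CRITERIA):
    """Assemble a single one-shot prompt with the reviewer's checks stated up front."""
    rules = "\n".join(f"{i}. {rule}" for i, rule in enumerate(criteria, start=1))
    return (
        f"Generate between {min_cards} and {max_cards} flashcards from the source below.\n"
        f"Every card must satisfy all of these rules:\n{rules}\n\n"
        f"SOURCE:\n{source_text}"
    )
```

Keeping the criteria in one list means the same rules can be reused by the judge prompts, so generation and evaluation do not drift apart.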

The results were immediate. One-shot generation with an improved prompt reached a review score above 0.93 on our best-performing model — compared to scores in the 0.64–0.85 range from the review-update pipeline. Generation time dropped from ~120 seconds to under 30. Fewer API calls meant lower cost per flashcard set.

Better quality. Faster. Cheaper. The eval data made the decision obvious.

The hint problem

With one-shot generation performing well on base flashcards, we turned to hints — the two-level scaffolding that helps students recall an answer without giving it away. Level 1 provides a contextual nudge. Level 2 offers a structural scaffold.

Generating good hints turned out to be the hardest part of the entire pipeline. The core failure mode: hints that reveal the answer.

Prompt: “An {{blank}} is a sequence in which each term after the first is obtained by adding a constant...”

Answer: “arithmetic sequence”

Hint 1: “This sequence type shares its first word with the name of a fundamental branch of mathematics...”

Hint 2: “The prefix ‘arithmet-’ comes from Greek...”

Both hints essentially give away the answer. A student reading these doesn’t need to recall anything.

Other failures included hints that restated the prompt, hints with factual errors (one claimed the painter known for water lilies was “Maurice”; it’s Claude Monet), and hints that claimed an answer “starts with the letter R” when the answer was “Hal Riney.”
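Some of these failures can be caught before an LLM judge ever sees the card. The sketch below is a purely lexical heuristic, assuming hints, answers, and prompts are plain strings; the function names, the stopword list, and the five-character prefix threshold are illustrative choices rather than anything from our pipeline.

```python
import re

STOPWORDS = {"a", "an", "the", "of", "and", "to", "in"}


def _words(text):
    """Lowercase alphanumeric tokens, ignoring punctuation and quote marks."""
    return re.findall(r"[a-z0-9]+", text.lower())


def _common_prefix_len(a, b):
    count = 0
    for x, y in zip(a, b):
        if x != y:
            break
        count += 1
    return count


def hint_leaks_answer(hint, answer, prompt="", min_prefix=5):
    """True if the hint repeats an answer word, or a long prefix of one.

    Words already visible in the prompt are not counted as leaks.
    """
    visible = set(_words(prompt)) | STOPWORDS
    hint_words = _words(hint)
    for answer_word in _words(answer):
        if answer_word in visible:
            continue
        for hint_word in hint_words:
            if hint_word == answer_word:
                return True
            if _common_prefix_len(hint_word, answer_word) >= min_prefix:
                return True
    return False


def first_letter_claim_is_wrong(hint, answer):
    """True if the hint says 'starts with the letter X' and X is not the answer's first letter."""
    match = re.search(r"starts with the letter\s+\W?([a-z])\b", hint.lower())
    if match is None or not answer.strip():
        return False
    return match.group(1) != answer.strip().lower()[0]
```

On the example above, this check flags Hint 2 (the fragment "arithmet" shares eight characters with "arithmetic") but not Hint 1, which leaks the answer through meaning rather than spelling. That gap is the reason a lexical filter can only complement an LLM judge, not replace it.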

When we added hint generation to the one-shot prompt, review scores dropped significantly for most models — from 0.944 to 0.642 for one model, a swing of over 30 percentage points.

Model comparison: four models, three evals

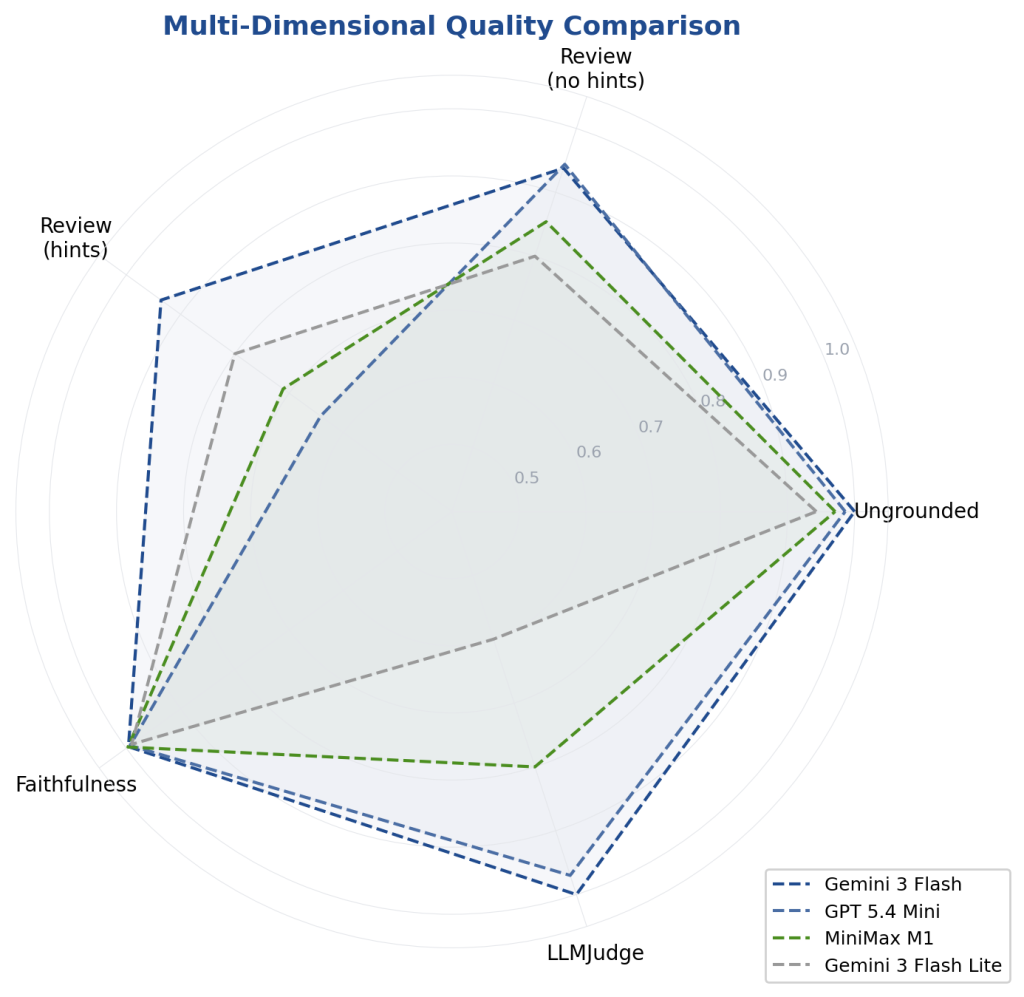

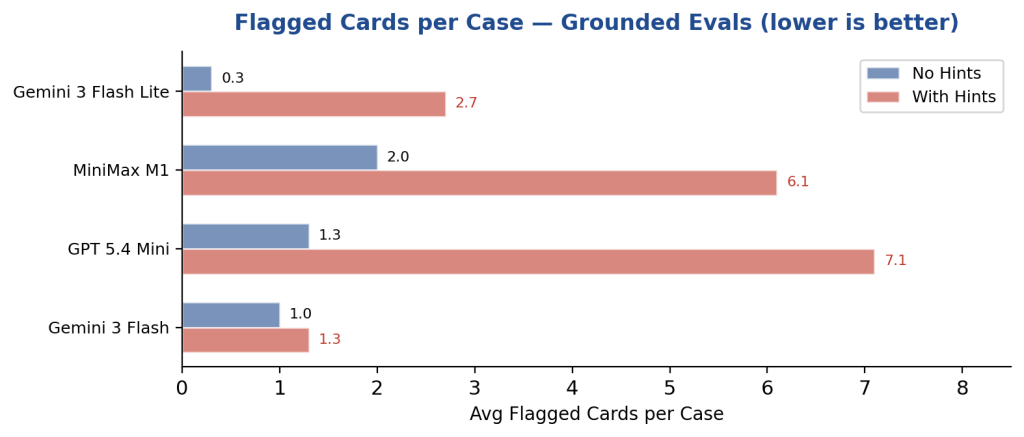

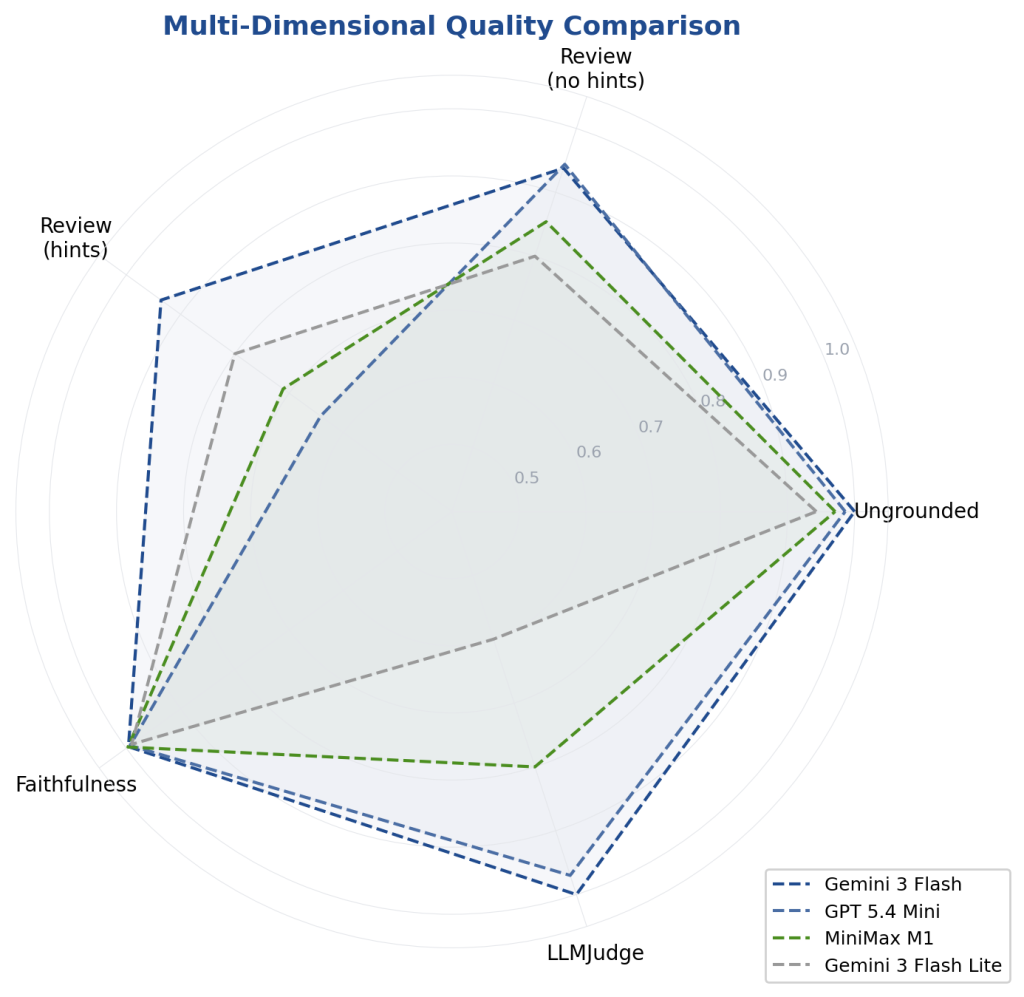

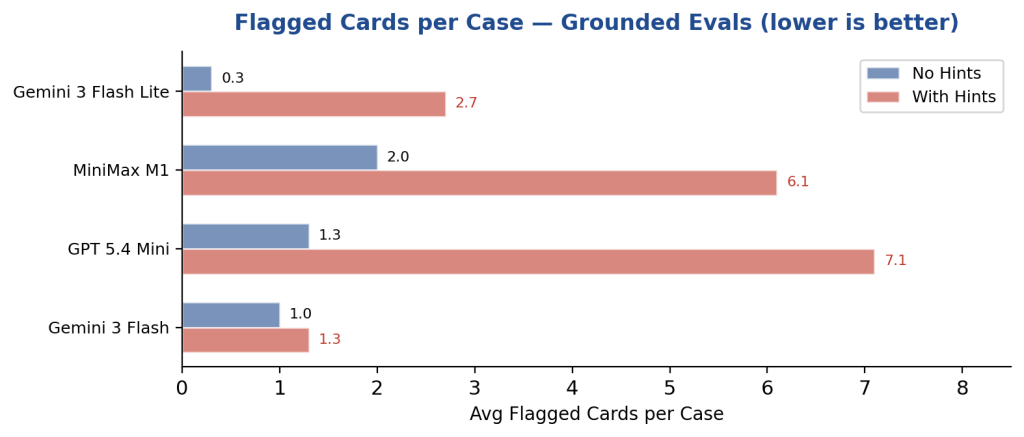

We benchmarked four models across all three eval suites: Gemini 3 Flash, GPT 5.4 Mini, MiniMax M1, and Gemini 3 Flash Lite. Each ran the same prompts on the same test cases.

Gemini 3 Flash: consistent quality at scale

Scored 1.000 on the ungrounded eval (perfect across all 10 cases), 0.938 on grounded without hints, and 0.936 with hints. Its quality barely moved when hints were added. It averaged just 1.3 flagged cards per case. The only model to pass the review quality gate on all seven grounded cases.

GPT 5.4 Mini: reliable format, fragile hints

The most structurally reliable model. Perfect card counts, perfect completeness. On grounded evals without hints: an excellent 0.944. But with hints, it collapsed to 0.642 — the lowest score of any model.

MiniMax M1: high volume, hint struggles

Consistently produced the most cards per case. Base generation quality scored 0.854 on grounded evals. But like GPT, it struggled with hints (0.711), and the Publicis Groupe case had 12 out of 25 cards flagged.

Gemini 3 Flash Lite: quality ceiling

Produced the fewest flagged cards per case when generating at low volume. But when pushed to higher output via prompt changes, LLM judge failures appeared on four out of 10 cases.

Full Results Summary

| Model | Ungrounded | Grounded | Grounded + Hints | Review Pass | Avg Issues |
| --- | --- | --- | --- | --- | --- |
| Gemini 3 Flash | 1.000 | 0.938 | 0.936 | 7/7 | 1.3 |
| GPT 5.4 Mini | 0.986 | 0.944 | 0.642 | 0/7 | 7.1 |
| MiniMax M1 | 0.971 | 0.854 | 0.711 | 1/7 | 6.1 |
| Gemini 3 Flash Lite | 0.943 | 0.800 | 0.800 | 3/7 | 2.7 |
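The hint-related figures quoted in this post can be rechecked directly from the table. The short sketch below copies the Grounded and Grounded + Hints columns and derives the per-model drop and the best-to-worst gap; the variable names are illustrative.

```python
# (grounded, grounded_with_hints) review scores, copied from the table above.
results = {
    "Gemini 3 Flash": (0.938, 0.936),
    "GPT 5.4 Mini": (0.944, 0.642),
    "MiniMax M1": (0.854, 0.711),
    "Gemini 3 Flash Lite": (0.800, 0.800),
}

# How much each model's score falls once hints are required.
hint_drop = {
    model: round(grounded - with_hints, 3)
    for model, (grounded, with_hints) in results.items()
}

# Best and worst models on the with-hints suite, and the gap between them.
best = max(results, key=lambda model: results[model][1])
worst = min(results, key=lambda model: results[model][1])
gap = round(results[best][1] - results[worst][1], 3)

for model, drop in hint_drop.items():
    print(f"{model}: drop of {drop:.3f} with hints")
print(f"best={best}, worst={worst}, gap={gap:.3f}")
```

The drops come out to 0.002, 0.302, 0.143, and 0.000 respectively, and the gap between Gemini 3 Flash and GPT 5.4 Mini on the hinted suite is 0.294.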

What we learned

Evals are the foundation, not a nice-to-have. Before evals, every pipeline change was a judgment call. After evals, every change had a number attached. The decision to drop the review-update step, which felt risky and counterintuitive, was obvious once we saw that it left review scores at 0.64–0.85 instead of above 0.93 while roughly quadrupling generation time (~120 seconds versus under 30).

More steps don’t mean more quality. The generate-review-update loop was our most “sophisticated” pipeline. It was also our worst-performing one. A single well-crafted generation prompt outperformed it on every metric: quality, speed, and cost.

Hints are the hardest generation task. Base flashcard generation is close to a solved problem across modern LLMs: three of the four models scored above 0.85 on grounded evals without hints, and the fourth scored 0.800. But hint generation separated the field dramatically, with a gap of 0.294 points between the best and worst model (0.936 versus 0.642).

Model selection should be task-specific. GPT 5.4 Mini excels at structured data extraction and schema compliance. But it’s the wrong pick for pedagogical hint generation. The “best model” depends entirely on what you’re measuring.

Prompt engineering > pipeline engineering. We invested weeks building a three-step agentic pipeline. A few days of prompt iteration on one-shot generation outperformed it. Before you add complexity to your architecture, exhaust the potential of your prompt.

The best pipeline isn’t the most sophisticated one. It’s the one your evals say produces the best cards.

We now run our eval suites on every prompt change, every model update, and every pipeline experiment. The numbers don’t lie, and they don’t get tired. They’ve become the single source of truth for how we deliver educational content — and they’ve made us faster, cheaper, and better at it.
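One simple way to turn "run the evals on every change" into a decision is a regression gate that compares a candidate run against a stored baseline. The sketch below assumes each run is summarized as a dictionary of suite name to review score; the suite keys, the `find_regressions` name, and the 0.01 tolerance are hypothetical choices for illustration.

```python
def find_regressions(baseline, candidate, tolerance=0.01):
    """Return {suite: (baseline_score, candidate_score)} for every suite that got worse.

    A suite missing from the candidate run counts as a regression.
    """
    regressions = {}
    for suite, old_score in baseline.items():
        new_score = candidate.get(suite)
        if new_score is None or new_score < old_score - tolerance:
            regressions[suite] = (old_score, new_score)
    return regressions


# Baseline taken from the Gemini 3 Flash row; the candidate numbers are made up.
baseline = {"ungrounded": 1.000, "grounded": 0.938, "grounded_hints": 0.936}
candidate = {"ungrounded": 1.000, "grounded": 0.950, "grounded_hints": 0.880}

regressions = find_regressions(baseline, candidate)
if regressions:
    print("Blocked:", regressions)
else:
    print("No regressions; safe to ship.")
```

With these made-up candidate scores, only the hinted suite is reported as a regression, so the change would be blocked even though the plain grounded score improved.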